AI labs often warn about AI model distillation and model extraction (also called “model stealing”). This is not just “trade secret paranoia.” It is a real risk when a powerful model is offered through an API (a way for apps to talk to a service).

Here’s the basic idea. If you can call an API, you can send lots of prompts very fast. You can save the answers. Then you can use those prompt → answer pairs to train a new model that acts similar.

Security researchers have shown this can work against real prediction APIs, including in Tramèr et al. (2016) and Orekondy et al. (2019).

Important accuracy note: An earlier draft of this post claimed Anthropic publicly accused specific Chinese AI companies of running a large Claude “distillation” campaign, with exact numbers for fake accounts and total requests. I could not verify those company names or those stats in reliable public sources. So those claims were removed.

Headline fit note: The current headline suggests a confirmed “caught stealing” case tied to named companies. This post does not claim that. What it does cover is the general risk of extraction, why it matters, and how providers try to reduce it.

Here’s what distillation and extraction attacks often look like, and why they matter for anyone using AI tools.

The Mechanics of a Distillation/Extraction Attack

Model distillation is a normal technique. You train a smaller “student” model to copy a bigger “teacher” model. Teams do this to cut cost and get faster replies (lower latency, meaning less waiting time).

A classic distillation paper is Hinton, Vinyals & Dean (2015).

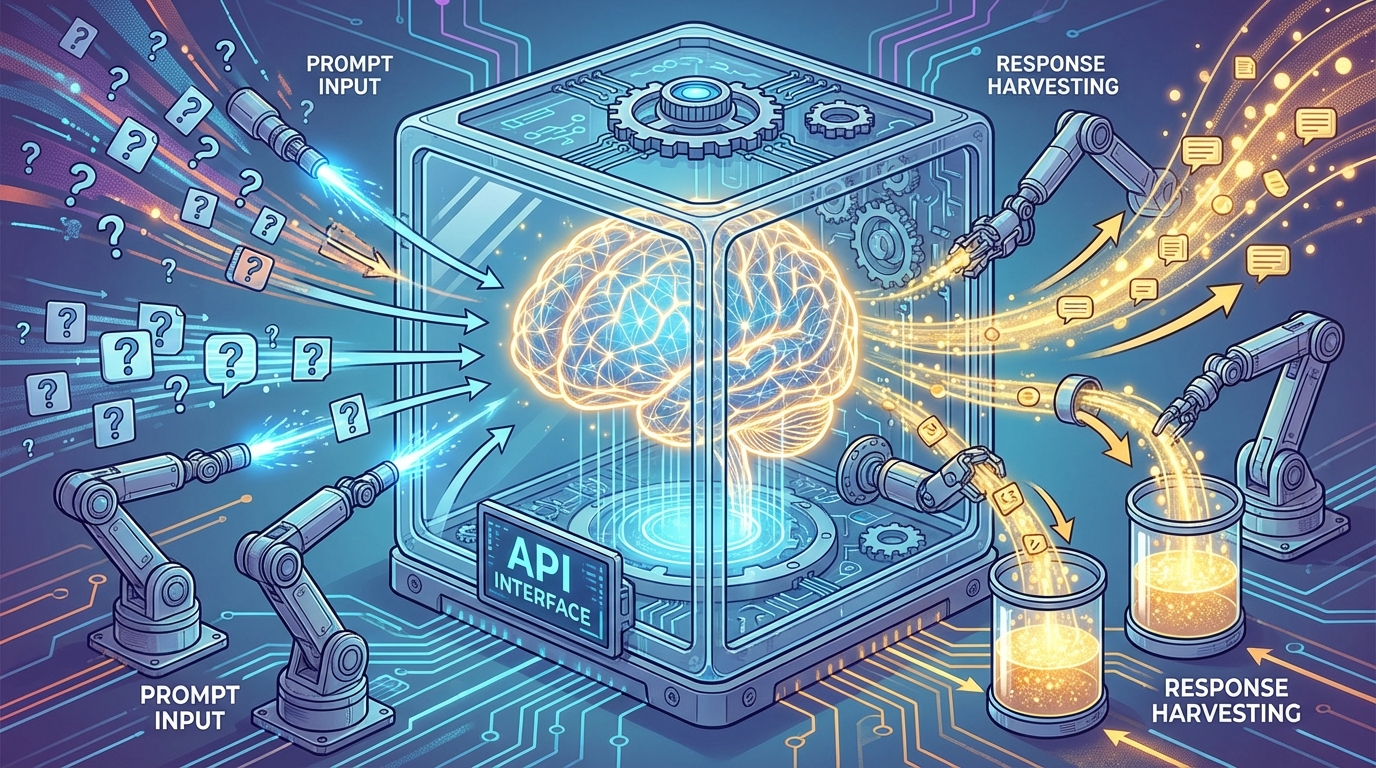

It becomes abuse when someone tries to copy a hosted model without permission. A common path is massive automated API calling.

In security research, this overlaps with model extraction. That is when an attacker uses black-box access (you can ask questions, but you can’t see the model’s inside) to build a “copycat” model. This has been shown in many settings, including Tramèr et al. (2016) and Orekondy et al. (2019) (“Knockoff Nets”).

At a high level, an extraction campaign often includes:

- Account/API key scaling: using many accounts or keys to send more requests and dodge rate limits (caps on how fast you can call an API).

- Systematic prompt design: asking lots of similar prompts to test one skill again and again (like code style, output format, or tool-call shapes).

- Dataset building: saving prompt → response pairs, then training the “student” model on them (often via supervised fine-tuning, which means “learn from examples”).

Providers also try to stop this using contracts and policy. For example, Anthropic’s Terms of Service describe rules for using their services and restrictions on misuse.

Why This Can Work (and What It Can and Can’t Copy)

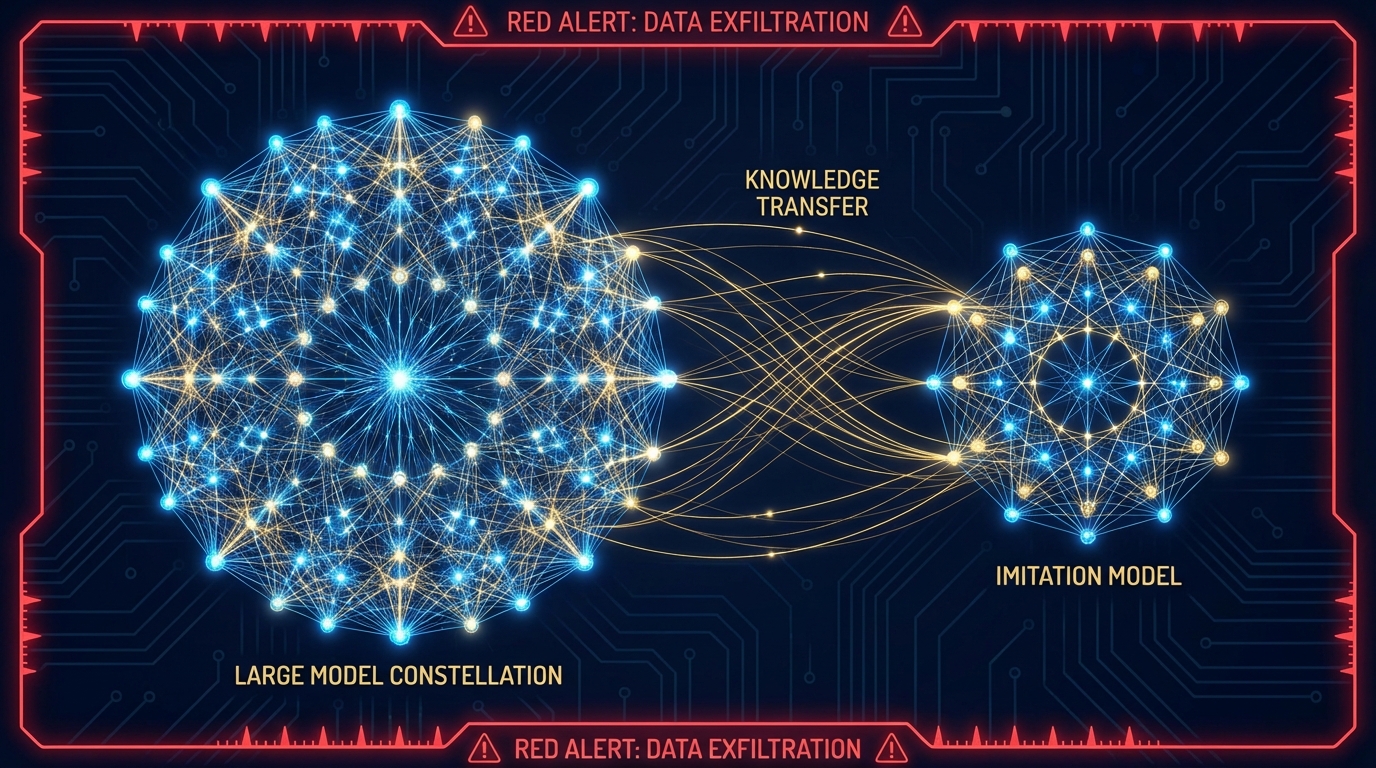

Extraction and unauthorized distillation can create useful copies of some behaviors. This is often easiest when the attacker targets one narrow skill.

For example, they might try to copy a certain output format, a writing voice, or a coding niche. Research shows that black-box querying can copy meaningful functionality, depending on budget and access, including in Orekondy et al. (2019).

But there are limits. A substitute model trained only on API outputs:

- May copy surface behavior (how answers look) without matching real reliability.

- Will not automatically include the original provider’s ops layer (monitoring, abuse detection, policy checks, and incident response).

- May fail more on edge cases, because the attacker’s data misses many real-world situations.

There is a real trade-off here. The more helpful and detailed a model is, the more “training signal” it can give to someone who collects outputs at scale.

The Real Cost: Incentives and Safety

Why does this matter beyond company competition?

1) Incentives: If strong model behavior can be copied cheaply from API access, it can reduce the reward for doing expensive original work. That includes data work, training runs, testing, and safety research. It can also push labs to tighten access or add more controls.

2) Safety doesn’t automatically transfer: A lot of “helpful and safe” behavior comes from extra training after pretraining. One common method is RLHF (reinforcement learning from human feedback, meaning people rate answers and the model learns from those ratings). A widely cited reference is Ouyang et al. (2022).

A copier may focus on raw capability and not match the same safety behavior. They also may not copy the safety systems around the model (like monitoring and abuse response).

3) Trust and transparency trade-offs: The more a lab shares about how a model works, the easier it can be for attackers to plan extraction. This is one reason risk guides stress planning, controls, and monitoring, like the NIST AI Risk Management Framework (AI RMF 1.0).

How Providers Detect and Mitigate Extraction Attempts

Providers often look for patterns that do not match normal human use. Common signals include:

- High-volume, regular traffic: a steady machine-like rhythm.

- Probing prompts: lots of prompts meant to “map” the model’s behavior.

- Odd account or key patterns: many new accounts, frequent key rotation, or linked metadata.

- Coordinated bursts: many IPs sending similar traffic at the same time.

Common defenses include:

- Rate limits and enforcement: slowing traffic, blocking keys, or adding stronger identity/payment checks.

- Output controls: safety filters and rules that limit certain risky outputs.

- Watermarking research: adding hidden patterns to text to help detect AI-written output. This is mostly about tracing and provenance (where content came from). It is not a full shield against extraction. See Kirchenbauer et al. (2023).

What This Means for You

If you use AI tools often, here are the practical takeaways:

- Safety can vary a lot: Two models can look similar, but act very differently on risky requests. They can also differ in how much behind-the-scenes abuse monitoring exists.

- Access and pricing pressure: If extraction attempts rise, providers may tighten access, lower default rate limits, add more checks, or adjust pricing.

- More friction for good automation: Some defenses can slow down honest high-volume users (like teams running tests or workflows).

Where We Go From Here

Unauthorized distillation and model extraction are not new. Research shows that black-box access can be enough to copy useful functionality in some settings, like in Tramèr et al. (2016) and Orekondy et al. (2019).

The big questions for the next phase are:

- How to keep broad access to strong models while lowering extraction risk.

- How providers can share threat signals without giving attackers a playbook.

- How contracts and policy should treat large-scale automated “capability copying.”

Distillation will stay a key optimization tool. The hard part is drawing—and enforcing—the line between normal distillation and unauthorized extraction.

Sources

- Tramèr et al. (2016), Early, widely cited work showing model extraction via prediction APIs

- Orekondy et al. (2019) (“Knockoff Nets”), Black-box model stealing using query-and-train methods

- Hinton, Vinyals & Dean (2015), Knowledge distillation basics

- Anthropic Terms of Service, Example of provider rules and misuse restrictions

- Ouyang et al. (2022), RLHF approach for instruction-following models

- NIST AI RMF 1.0, Guidance on AI risk management and controls

- Kirchenbauer et al. (2023), LLM text watermarking research

- NISTIR 8269 (2020), NIST taxonomy that includes model extraction as an adversarial ML risk

TL;DR

- AI model distillation is a normal way to make models smaller and cheaper, but it becomes abuse when done without permission using massive API calls.

- Model extraction (model stealing) can work with black-box access, as shown in published research.

- A copycat model may imitate outputs, but it won’t automatically copy the provider’s safety training, monitoring, and abuse response.

- Labs fight extraction with rate limits, account checks, traffic monitoring, and output controls (plus research like watermarking).

- This post does not verify a public Anthropic claim naming Chinese companies or giving specific numbers for a Claude theft campaign.

FAQ

What is AI model distillation?

It is when you train a smaller model to copy a bigger model. People do it to make AI cheaper and faster.

What is model extraction (model stealing)?

It is when someone uses an API like a “question machine.” They ask tons of questions, save the answers, and train a new model to respond in similar ways.

Can someone fully copy a model through an API?

Not perfectly. They may copy some skills or styles, especially narrow ones. But they often miss edge cases, and they don’t get the provider’s behind-the-scenes safety and monitoring systems.

How do AI companies try to stop extraction?

They use rate limits, stronger account checks, and monitoring for unusual traffic. They can also use safety filters and other output controls.

Did Anthropic publicly name Chinese companies stealing Claude in a verified report?

Not in this article. The earlier draft had names and numbers that could not be verified in reliable public sources, so they were removed.

TL;DR

- AI model distillation is normal, but it turns into abuse when someone copies a hosted model without permission using huge numbers of API calls.

- Model extraction (model stealing) is a real, studied attack and has been shown to work against black-box prediction APIs in published research.

- A copycat model can mimic how answers look, but it usually won’t match reliability, edge-case coverage, or the provider’s safety and monitoring systems.

- Providers try to spot extraction using traffic patterns (volume, probing prompts, account behavior) and stop it with rate limits, enforcement, and output controls.

- This article does not verify any public Anthropic accusation naming Chinese companies or listing specific request/account numbers for a Claude theft campaign.

FAQ

What does “AI model distillation” mean in plain English?

It means training a smaller model to copy a bigger model. The goal is usually lower cost and faster answers.

What is model extraction (model stealing)?

It means using an API to ask many questions, saving the answers, and training a new model on those examples to behave similarly.

Is model extraction only a theory, or has it been proven?

It has been shown in research. For example, published work like Tramèr et al. (2016) and Orekondy et al. (2019) demonstrates black-box model stealing methods.

If someone copies outputs, do they also copy safety?

Not automatically. They might copy some “helpful” style, but they usually do not copy the original lab’s safety training and the monitoring systems around the model.

Did this article confirm Anthropic caught named Chinese companies stealing Claude?

No. The earlier draft included names and numbers that could not be verified in reliable public sources, so they were removed.